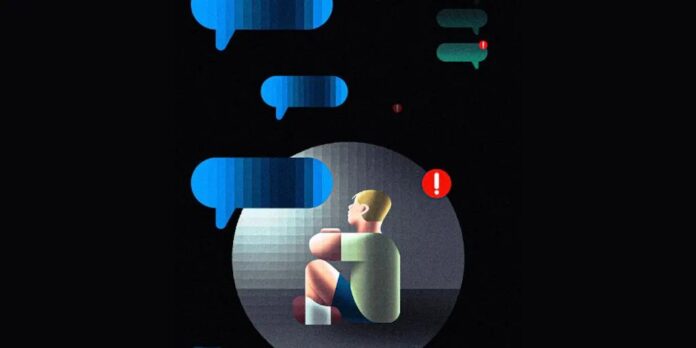

Schools are increasingly turning to artificial intelligence to address a growing mental health crisis among students. Faced with shrinking budgets and overwhelmed counseling staff, districts like Putnam County, Florida, are deploying AI platforms that scan student chats for warning signs of self-harm or distress. The goal? To intervene before crises escalate.

These systems, such as Alongside, flag concerning language and alert counselors like Brittani Phillips. She recounts an instance where an AI alert led her to a potentially life-saving intervention with an eighth-grader. The platform’s proponents argue it provides better access to mental health resources, particularly in understaffed rural schools. But experts and families are growing wary of teens over-relying on AI for emotional support.

The Rise of Digital Confidantes

One key reason students confide in AI is its perceived lack of judgment. Unlike a human counselor, a chatbot doesn’t watch a student’s facial expressions or body language, and being observed that way can make adolescents anxious. For a generation raised on instant messaging, AI interfaces feel familiar and accessible. Students often find it easier to text their problems to a bot than to schedule an appointment or speak face-to-face.

Sarah Caliboso-Soto, a clinical social worker at USC, acknowledges AI’s potential as a “first line of defense” that regularly checks in with students and directs them to professional help when needed. However, she warns against replacing human interaction entirely. AI lacks the nuanced discernment of a trained clinician, who can interpret subtle cues and provide more informed guidance.

The Price of Automation

Alongside’s services start at roughly $10 per student annually, making it an affordable option for resource-strapped schools. Yet, experts caution against overdependence. The technology can miss critical emotional cues and may even reinforce unrealistic positivity, potentially hindering genuine progress.

Moreover, these AI platforms raise privacy concerns. Unlike licensed therapists, chatbots are not always held to the same confidentiality standards. In some cases, alerts lead to law enforcement involvement: Phillips acknowledges involving law enforcement when a student expressed suicidal thoughts.

Beyond the Algorithm

Critics argue that AI tools address a symptom rather than the root cause: widespread loneliness and social disconnection. Sam Hiner, director of The Young People’s Alliance, believes that tech platforms often exacerbate isolation by offering a “crutch” instead of fostering real community. He fears “parasocial relationships,” where students develop one-sided emotional attachments to AI, further eroding their social skills.

One overlooked issue is the potential for manipulation. Some students test the limits of these systems by typing provocative statements (“My uncle touches me”) to gauge whether anyone is listening. Phillips has observed this behavior, noting that some boys simply want to see if anyone cares.

The Human Element Remains Essential

While AI can triage cases and free up counselors’ time, experts agree it should not replace human connection. The technology’s true value lies in augmenting, not substituting, clinical judgment. As Phillips points out, the key to building trust with students is showing them that a real person is monitoring the system and genuinely cares.

Ultimately, AI in schools is a double-edged sword. It can provide much-needed support, but only if it is implemented responsibly, with human oversight and a clear understanding that technology cannot replace the empathy and critical thinking of trained professionals.